by John R. Fischer, Senior Reporter | May 15, 2018

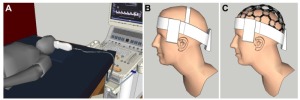

Researchers are building a helmet-shaped interface for ultrasound imaging of the brain

Ultrasound imaging of the brain may soon be a reality simply by donning a helmet.

Researchers at Vanderbilt University are customizing an AI-equipped, hat-shaped interface that integrates ultrasound and electroencephalogram (EEG) technology to image the brain in real time and determine how actions and emotions stimulate different parts of it. The aim is to let people use their thoughts to direct software and robotics in performing a variety of tasks.

“We would like to have imaging to support our goal of real-time assessment of brain function – think fMR,” Brett Byram, assistant professor of biomedical engineering, told HCB News. “As part of our effort to get at brain function, we are integrating EEG with the ultrasound because the EEG provides complementary information to the functional ultrasound information. If we can create reliable ultrasound images of the brain, that in itself will become a broadly useful end goal.”

Conventional ultrasound cannot readily image the brain because the skull's acoustic impedance differs from that of the soft tissue inside it, and ultrasound waves are reflected wherever the acoustic impedance of adjacent tissues is mismatched. This ultimately causes beams to bounce around inside the skull.
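To put the impedance mismatch in rough numbers, the Python sketch below uses the standard normal-incidence formula for the fraction of ultrasound intensity reflected at a boundary between two media, R = ((Z2 - Z1) / (Z2 + Z1))^2. The impedance values are approximate, textbook-order figures chosen for illustration and are not taken from the Vanderbilt team; the function and constant names are hypothetical.

```python
def intensity_reflection_coefficient(z1: float, z2: float) -> float:
    """Fraction of incident intensity reflected at a flat boundary.

    Assumes normal incidence and two lossless media with acoustic
    impedances z1 and z2 (same units, e.g. MRayl).
    """
    if z1 <= 0 or z2 <= 0:
        raise ValueError("acoustic impedances must be positive")
    return ((z2 - z1) / (z2 + z1)) ** 2


# Illustrative assumptions only, in MRayl (not values from the article).
SOFT_TISSUE_Z = 1.6
SKULL_BONE_Z = 7.8

if __name__ == "__main__":
    r = intensity_reflection_coefficient(SOFT_TISSUE_Z, SKULL_BONE_Z)
    print(f"Reflected fraction at a tissue/bone boundary: {r:.2f}")
```

With these assumed values, a little over 40 percent of the intensity is reflected at a single tissue-to-bone boundary, and a beam must cross the skull on the way in and again on the way out. This simple model ignores attenuation, scattering and the skull's irregular thickness, which degrade images further.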

Though the aim is not for ultrasound to replace other imaging modalities, their cost, radiation exposure and limited portability make them challenging for providers to acquire and use.

The integration of EEG will enable clinicians to view brain perfusion and observe which parts of the brain are stimulated by certain emotions, actions or movements.

At a basic level, the helmet could also potentially produce brain images that match the clarity of ultrasound images of the heart and womb, using artificial intelligence to account for distortions and deliver workable images.

Byram says the technology could be used for real-time imaging during surgery, as well as to assist a variety of patients, such as an ALS patient with limited mobility, in performing different tasks.

Using the helmet, an individual with ALS could direct a robotic arm to retrieve a glass of water, with the technology detecting the thought from their blood flow and the EEG information.

Byram further notes that the cost advantages of both ultrasound and EEG could enhance the presence of value-based care in different healthcare settings.

“Ultrasound and EEG are both low-cost modalities,” he said. “If the same outcome – diagnostic or otherwise – can be achieved with these, then that's a win for value-based healthcare.”

Byram is currently designing the helmet with Leon Bellan, assistant professor of mechanical engineering and biomedical engineering, and Michael Miga, Harvie Branscomb Professor and professor of biomedical engineering, radiology and neurological surgery, with plans to bring additional medical center physicians on board as the work progresses.

Project development is funded by a $550,000 National Science Foundation Faculty Early Career Development grant.