by John R. Fischer, Senior Reporter | June 22, 2018

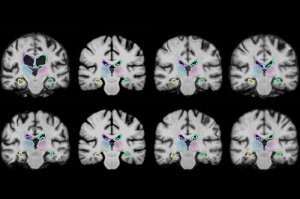

A new algorithm records and applies information from past medical image registrations to new ones

Hours spent registering pairs of medical images may soon be reduced to minutes with the development of an algorithm that “learns” how to perform the task while doing it.

Researchers at the Massachusetts Institute of Technology (MIT) have released two papers on VoxelMorph, a machine-learning tool that learns how to align images and estimates optimal alignment parameters as it registers thousands of image pairs. It registers brain scans and other 3D images more than 1,000 times faster than conventional techniques.

"The tasks of aligning a brain MR shouldn't be that different when you're aligning one pair of brain MRs or another," Guha Balakrishnan, a co-author of both papers and a graduate student in MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) and Department of Electrical Engineering and Computer Science (EECS), said in a statement. "There is information you should be able to carry over in how you do the alignment. If you're able to learn something from previous image registration, you can do a new task much faster and with the same accuracy."

Aligning the voxels in one MR volume with those in a second is time-consuming, and it becomes more computationally complex when the scans come from different machines and have different spatial orientations. Analyzing scans from large populations lengthens the process further, potentially to hundreds of hours depending on the number of patients.

The process remains slow because conventional algorithms discard all data on voxel locations once a registration is complete, forcing them to start from scratch when registering a new pair of images.

Powered by a convolutional neural network (CNN), VoxelMorph processes images and other information across several layers of computation. It was trained on 7,000 publicly available MR brain scans that were fed into the algorithm in pairs.

The algorithm uses its CNN and a spatial transformer to capture and learn similarities of voxels in one MR scan with those of another, such as anatomical shapes common to both scans, and to calculate optimized parameters that can be applied to any pair of images.
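
To make the warping step concrete, here is a minimal sketch in plain Python of what a spatial transformer does once a displacement has been predicted for each pixel: it resamples the moving image at the displaced locations using interpolation. This is an illustration only, not the researchers' code. It works on a tiny 2D image instead of a 3D volume, and the names `warp_image`, `moving`, and `flow` are hypothetical.

```python
import math


def warp_image(moving, flow):
    """Warp a 2D image with a per-pixel displacement field (bilinear sampling).

    moving: list of rows of floats (H x W).
    flow:   list of rows of (dy, dx) tuples; output pixel (y, x) samples
            the moving image at (y + dy, x + dx).
    Both names are illustrative assumptions, not taken from VoxelMorph.
    """
    height, width = len(moving), len(moving[0])
    warped = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dy, dx = flow[y][x]
            # Clamp the sampling location so it stays inside the image.
            sy = min(max(y + dy, 0.0), height - 1.0)
            sx = min(max(x + dx, 0.0), width - 1.0)
            y0, x0 = int(math.floor(sy)), int(math.floor(sx))
            y1, x1 = min(y0 + 1, height - 1), min(x0 + 1, width - 1)
            wy, wx = sy - y0, sx - x0
            # Blend the four neighboring pixels by their distance weights.
            top = (1 - wx) * moving[y0][x0] + wx * moving[y0][x1]
            bottom = (1 - wx) * moving[y1][x0] + wx * moving[y1][x1]
            warped[y][x] = (1 - wy) * top + wy * bottom
    return warped


# A bright pixel at column 2 in the moving image, column 1 in the fixed image.
moving = [[0.0, 0.0, 0.0, 0.0],
          [0.0, 0.0, 1.0, 0.0],
          [0.0, 0.0, 0.0, 0.0]]
fixed = [[0.0, 0.0, 0.0, 0.0],
         [0.0, 1.0, 0.0, 0.0],
         [0.0, 0.0, 0.0, 0.0]]

# A uniform field that samples one pixel to the right aligns the two images.
flow = [[(0.0, 1.0)] * 4 for _ in range(3)]
aligned = warp_image(moving, flow)
```

In this toy case the displacement field is written by hand. In the approach the article describes, the network predicts it from the image pair.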

When fed two scans, the algorithm aligns them using a simple mathematical function. It requires no information beyond the image data, and no traditional algorithm has to be run first to maintain its accuracy.
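
Learning from image data alone means the training signal has to come from the images themselves. The sketch below shows one way such a signal could be computed: a similarity term that compares the fixed image with the warped moving image, plus a penalty that discourages abrupt changes in the displacement field. The article does not state the exact loss, so the smoothness term, the `smooth_weight` value, and all function names here are assumptions made for illustration.

```python
def mse(fixed, warped):
    """Mean squared intensity difference between two equally sized 2D images."""
    count = len(fixed) * len(fixed[0])
    total = sum((f - w) ** 2
                for row_f, row_w in zip(fixed, warped)
                for f, w in zip(row_f, row_w))
    return total / count


def smoothness(flow):
    """Mean squared difference between neighboring displacement vectors."""
    height, width = len(flow), len(flow[0])
    total, count = 0.0, 0
    for y in range(height):
        for x in range(width):
            # Compare each pixel with its lower and right neighbors.
            for ny, nx in ((y + 1, x), (y, x + 1)):
                if ny < height and nx < width:
                    total += sum((a - b) ** 2
                                 for a, b in zip(flow[ny][nx], flow[y][x]))
                    count += 1
    return total / count if count else 0.0


def registration_loss(fixed, warped, flow, smooth_weight=0.01):
    """Similarity plus weighted smoothness; smooth_weight is a hypothetical value."""
    return mse(fixed, warped) + smooth_weight * smoothness(flow)


fixed = [[0.0, 1.0], [0.0, 0.0]]
misaligned = [[1.0, 0.0], [0.0, 0.0]]
uniform_flow = [[(0.0, 0.0), (0.0, 0.0)], [(0.0, 0.0), (0.0, 0.0)]]

loss_aligned = registration_loss(fixed, fixed, uniform_flow)
loss_misaligned = registration_loss(fixed, misaligned, uniform_flow)
```

Neither term needs a reference registration produced by another algorithm. A perfectly aligned pair with a uniform field scores zero, and the score rises as the images disagree or the field becomes irregular.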

Once training was complete, the researchers tested their creation on 250 additional brain scans, obtaining results within two minutes on a traditional central processing unit and in under one second on a graphics processing unit.

In addition to brain scans, the algorithm could be applied in a variety of ways and is currently being used to assess lung images. It could even offer image registration during operations in near-real time, giving surgeons a much clearer picture of their progress. When resecting a brain tumor, for example, that could reduce the need for repeat operations, saving patients time and expense.

The findings were presented at the Conference on Computer Vision and Pattern Recognition (CVPR) and will be presented a second time at the Medical Image Computing and Computer Assisted Intervention (MICCAI) conference in September.

MIT researchers did not respond to a request for comment.