by John R. Fischer, Senior Reporter | August 15, 2019

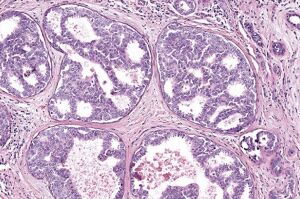

A new AI solution can distinguish between hard-to-read pathologies, such as DCIS and atypia.

U.S. researchers have developed a new AI solution to help pathologists distinguish between complex biopsies when diagnosing breast cancer.

The system can interpret breast biopsy images that are challenging for the human eye as accurately as, or even more accurately than, experienced pathologists. It is especially useful in distinguishing ductal carcinoma in situ (a noninvasive type of breast cancer known as DCIS) from breast atypia (abnormal cells associated with a higher risk for breast cancer), which is the most difficult challenge in breast cancer diagnostics, according to Dr. Joann Elmore.

"It is critical to get a correct diagnosis from the beginning so that we can guide patients to the most effective treatments," Elmore, the study's senior author and a professor of medicine at the David Geffen School of Medicine at UCLA, said in a statement. She added that "medical images of breast biopsies contain a great deal of complex data and interpreting them can be very subjective. Distinguishing breast atypia from ductal carcinoma in situ is important clinically but very challenging for pathologists. Sometimes, doctors do not even agree with their previous diagnosis when they are shown the same case a year later."


The solution builds on a 2015 study led by Elmore, which found frequent disagreements among pathologists in the interpretation of breast biopsies. Diagnostic errors were made in about one of every six cases of women with DCIS, while about half of the atypia biopsies were incorrectly diagnosed. By drawing on AI, the solution is expected to provide consistently more accurate readings, relying on large data sets to recognize patterns associated with cancer that are difficult for humans to see.

Researchers trained the system on 240 breast biopsy images to recognize patterns related to several types of breast lesions. These ranged from benign lesions and atypia to DCIS and invasive cancers, with the correct diagnosis for each image determined separately by a consensus of three expert pathologists.

The readings of the solution were then compared to those of 87 U.S. pathologists, with AI outperforming the physicians in distinguishing DCIS from atypia. Its sensitivity registered between 0.88 and 0.89, while the pathologists’ average was 0.70. It also came close to performing as well as the doctors in differentiating cancer from non-cancer cases.

"These results are very encouraging," said Elmore. "There is low accuracy among practicing pathologists in the U.S. when it comes to the diagnosis of atypia and ductal carcinoma in situ, and the computer-based automated approach shows great promise."

The study was supported by the National Cancer Institute of the National Institutes of Health. Research partners besides UCLA included Seattle Children’s Hospital; the University of Washington; Southern Ohio Pathology Consultants; and the University of Vermont.

The findings were published in the journal JAMA Network Open.