by John R. Fischer, Senior Reporter | April 13, 2023

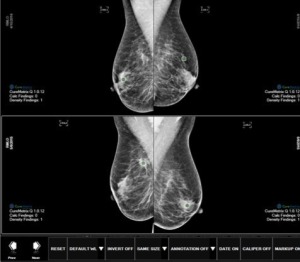

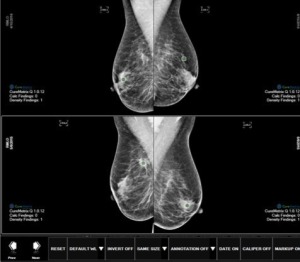

A new approach trains deep learning models to assess breast density as accurately as radiologists while using less data.

Despite working with limited data sets, British researchers have found a way to train deep learning models that accurately estimate breast density, and to apply the findings to cancer risk prediction and image segmentation.

Instead of building their own model from scratch, the group started with two independent deep learning models initially trained on ImageNet, a nonmedical image data set of over a million images. Using an approach called transfer learning, they built a framework that trains models more efficiently with less medical imaging data, and they outlined their findings in the study "Automatic assessment of mammographic density using a deep transfer learning method."
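To make the transfer learning idea concrete, here is a minimal Python sketch under clearly stated assumptions: a tiny fixed ReLU layer (the hypothetical `FROZEN_WEIGHTS`) stands in for an ImageNet-pretrained network, the three-pixel "images" and their 0-100 density labels are synthetic, and only a new linear regression head is trained by gradient descent. It illustrates the general pattern of reusing frozen pretrained features when labeled data is scarce; it is not the study's method or code.

```python
import random

# HYPOTHETICAL stand-in for an ImageNet-pretrained backbone: a fixed
# 3-input, 2-output linear layer followed by ReLU. It is never updated.
FROZEN_WEIGHTS = ((0.5, -0.2, 0.1), (0.3, 0.8, -0.4))


def extract_features(pixels):
    """Frozen feature extractor: maps 3 pixel values to 2 ReLU features."""
    return [max(0.0, sum(w * p for w, p in zip(row, pixels))) for row in FROZEN_WEIGHTS]


def predict(head_w, head_b, features):
    """New, trainable linear head: features -> density score."""
    return sum(w * f for w, f in zip(head_w, features)) + head_b


def mse(head_w, head_b, feats, targets):
    """Mean squared error of the head's predictions."""
    return sum((predict(head_w, head_b, x) - y) ** 2 for x, y in zip(feats, targets)) / len(feats)


def train_head(feats, targets, lr=0.1, steps=2000):
    """Gradient descent on the head only; the backbone stays frozen."""
    n, d = len(feats), len(feats[0])
    head_w, head_b = [0.0] * d, 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(feats, targets):
            err = predict(head_w, head_b, x) - y
            for i in range(d):
                grad_w[i] += 2 * err * x[i] / n
            grad_b += 2 * err / n
        head_w = [w - lr * g for w, g in zip(head_w, grad_w)]
        head_b -= lr * grad_b
    return head_w, head_b


if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic 3-pixel "images"; real inputs would be preprocessed mammograms.
    images = [[rng.random() for _ in range(3)] for _ in range(100)]
    # Features are computed once, since the backbone never changes.
    feats = [extract_features(img) for img in images]
    # Made-up density labels on a 0-100 visual analogue scale.
    targets = [40 * f[0] + 30 * f[1] + 10 for f in feats]
    head_w, head_b = train_head(feats, targets)
    print("MSE before training:", mse([0.0, 0.0], 0.0, feats, targets))
    print("MSE after training:", mse(head_w, head_b, feats, targets))
```

Because only the small head is fitted, far fewer labeled examples are needed than for training the whole network; the frozen layer's weights are identical before and after training.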

The aim was to create a solution as accurate as experienced radiologists, whose interpretations of breast density play a crucial role in breast cancer risk assessment, according to Professor Susan Astley, chair of the division of Informatics, Imaging and Data Sciences at the University of Manchester.


“The advantage of the deep learning-based approach is that it enables automatic feature extraction from the data itself. This is appealing for breast density estimations since we do not completely understand why subjective expert judgments outperform other methods,” she said in a statement.

Astley and her colleagues used nearly 160,000 full-field digital mammograms from 39,357 women. Radiologists, advanced practitioner radiologists or breast physicians had marked each mammogram with a density value on a visual analogue scale.

They preprocessed the images to reduce the computational load of training and conserve memory, used the deep learning models to extract features from the scans, and mapped those features to a set of density scores. They then combined the scores with an ensemble approach to produce a final density estimate.
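The article does not say how the images were downsized or how the ensemble combined the scores. As a minimal sketch filling those gaps with assumptions, block-average downsampling stands in for the memory-saving preprocessing and an unweighted mean stands in for the ensemble; both choices, and the function names `downsample` and `ensemble_density`, are hypothetical.

```python
def downsample(image, factor):
    """Shrink an image (list of equal-length rows) by averaging
    non-overlapping factor x factor blocks, cutting memory by factor**2."""
    rows, cols = len(image), len(image[0])
    if rows % factor or cols % factor:
        raise ValueError("image dimensions must be divisible by factor")
    return [
        [
            sum(image[r + i][c + j] for i in range(factor) for j in range(factor)) / factor**2
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]


def ensemble_density(model_scores):
    """Combine per-model density estimates by an unweighted mean (an assumed rule)."""
    if not model_scores:
        raise ValueError("need at least one model score")
    return sum(model_scores) / len(model_scores)


if __name__ == "__main__":
    image = [
        [0, 0, 4, 4],
        [0, 0, 4, 4],
        [8, 8, 2, 2],
        [8, 8, 2, 2],
    ]
    print(downsample(image, 2))  # [[0.0, 4.0], [8.0, 2.0]]
    # Hypothetical scores from two models for the same mammogram.
    print(ensemble_density([31.0, 35.0]))  # 33.0
```

Averaging is the simplest ensemble rule; a weighted mean or a learned combiner are common alternatives when one model is known to be more reliable.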

“The model’s performance is comparable to those of human experts within the bounds of uncertainty,” said Astley.

The findings were published in the Journal of Medical Imaging.