by John R. Fischer, Senior Reporter | August 29, 2018

Researchers have developed a new AI system that can detect tiny, hard-to-see tumors on CT scans

The smaller the tumor, the harder it is to see with human eyes.

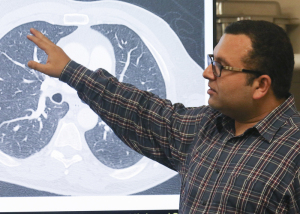

Researchers at the University of Central Florida’s Computer Vision Research Center are looking to solve this predicament with the development of a new AI system that can detect tiny masses of lung cancer in CT scans.

“Conventional technology relies on computationally expensive multi-stage frameworks and normally fails to capture these small tumors,” Naji Khosravan, a computer vision research scientist at Netflix and a Ph.D. student at the center, who is helping to develop the solution, told HCB News. “Our formulation enables our system to efficiently capture these hard-to-see tumors in one shot and with high accuracy.”

The system searches for patterns in CT scans and teaches itself how to locate tiny tumors, using an approach similar to the algorithms that facial-recognition software uses to scan thousands of faces for a match to a particular pattern. The technique is modeled after the brain, where connections between neurons strengthen as it develops and learns.

The researchers began the learning process by feeding their software more than 1,000 CT scans provided by the National Institutes of Health through a collaboration with the Mayo Clinic.

Each graduate student on the project is teaching the computer a different task. Khosravan is building the backbone of the learning system. His colleague, Sarfaraz Hussein, is teaching it to differentiate cancerous from benign tumors, while another, Rodney LaLonde, is showing it how to focus on lung tissue and ignore the other tissues, nerves and masses that appear on CT scans.

Current testing results show a 95 percent accuracy rate with the system, compared to 65 percent with human eyes.

“Efficiency while maintaining accuracy is the key toward this transition,” Khosravan said. “We believe this is a big step toward helping radiologists and the feasibility of moving AI research to actual use in daily practice in clinics to save lives.”

Leading the group is engineering assistant professor Ulas Bagci.

An additional colleague, Harish Ravi Parkash, is applying the findings of this project to the design of another system for identifying or predicting brain disorders.

The group plans to unveil its findings in September at the MICCAI 2018 conference in Spain and, from there, hopes to bring its project into a hospital setting. The researchers are currently looking for partners to accomplish this and, if successful, aim to release the technology onto the market within one or two years.
